Intelligent Autonomous Systems

Intelligent Autonomous Systems

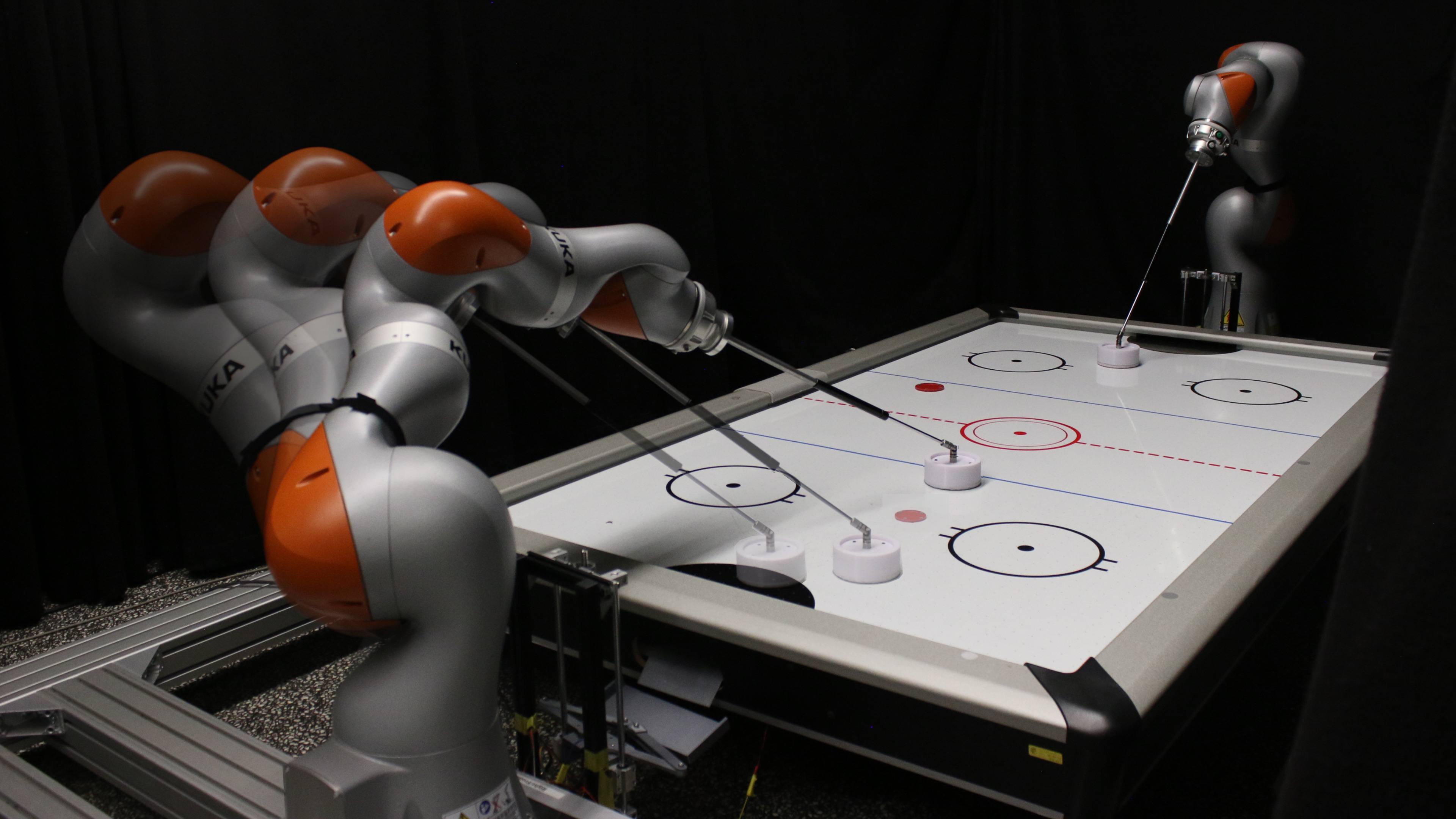

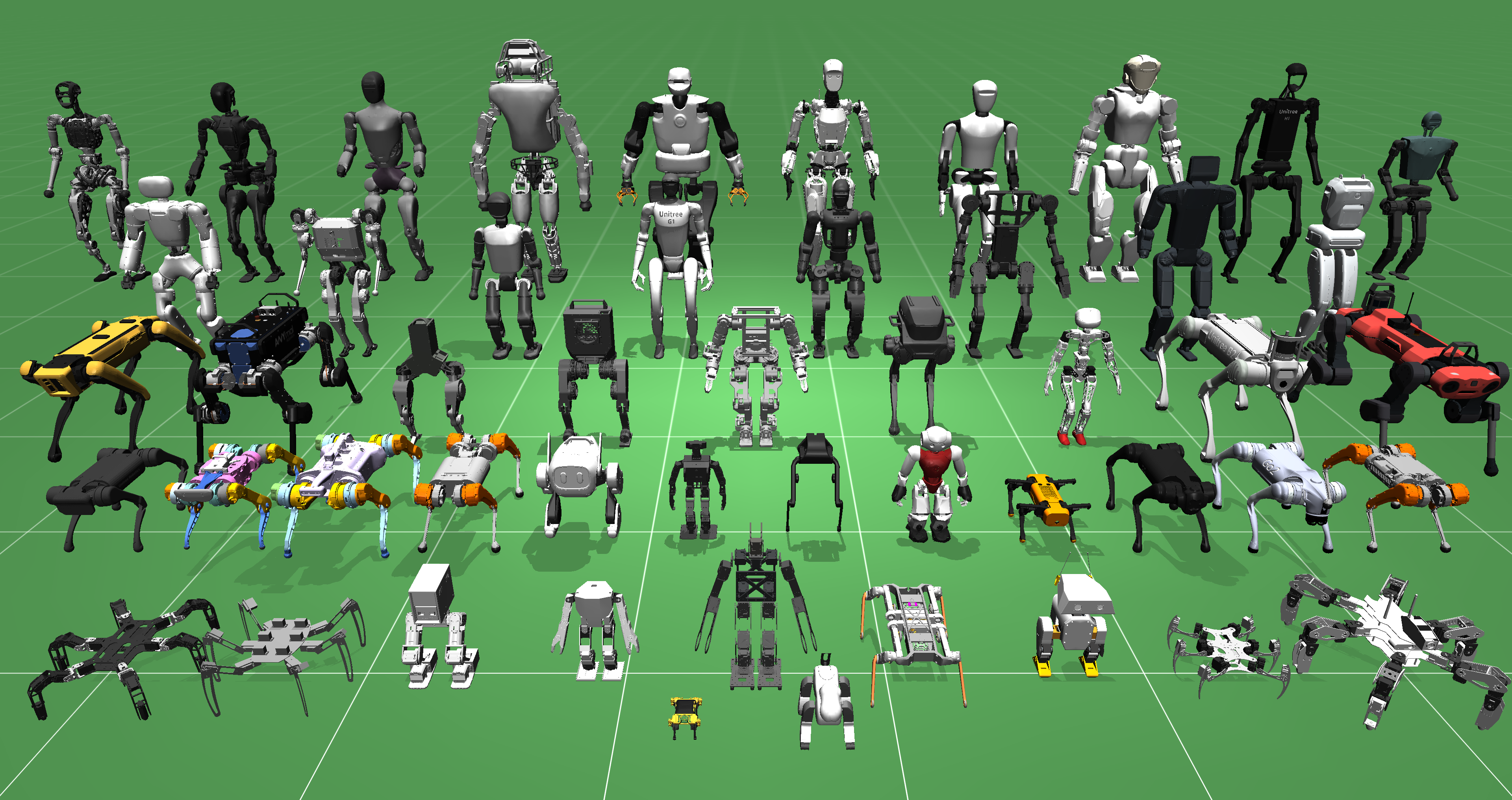

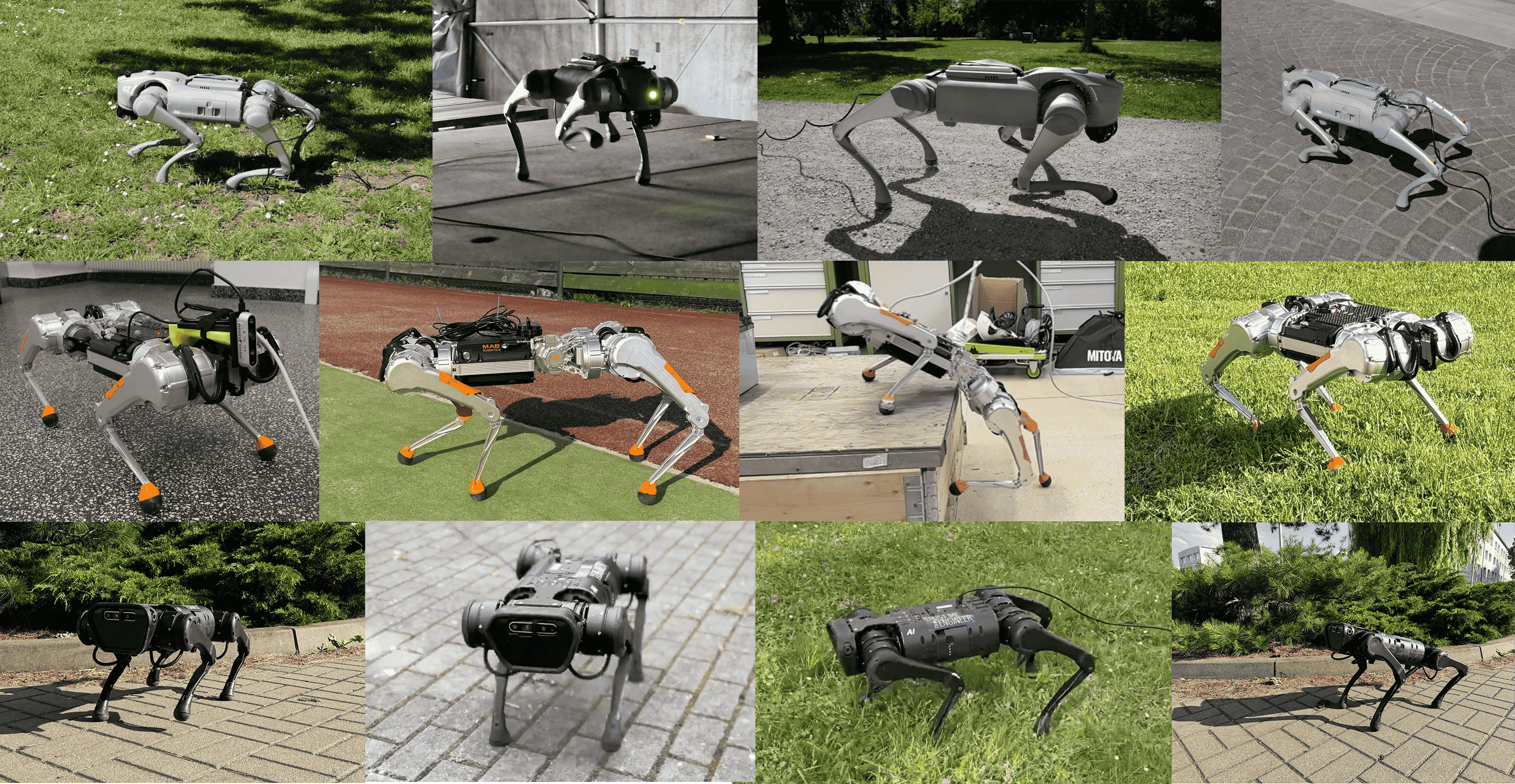

Welcome to the Intelligent Autonomous Systems Group of the Computer Science Department of the Technische Universitaet Darmstadt. Our research centers around the goal of bringing advanced motor skills to robotics using techniques from machine learning and control. Please check out our research or contact our lab members. Creating autonomous robots that can learn to assist humans in situations of daily life is a fascinating challenge for machine learning.

Upcoming Talks

| Date | Time | Location |

| 5.06.2026 | 15:00-15:30 | E202 |

| Keagan Holmes, Intermediate M.Sc. Thesis Defense | ||

| Date | Time | Location |

| 12.06.2026 | 14:00-14:40 | E202+http://talks.robot-learning.net |

| Arsenii Mustafin (Aalto University), Invited Talk: Geometric View of MDPs and What It Gives Us | ||

| Date | Time | Location |

| 19.06.2026 | 15:00-15:30 | E202+http://talks.robot-learning.net |

| Ahmad Hamad, B.Sc. Thesis Defense | ||

While this aim has been a long-standing vision of artificial intelligence and the cognitive sciences, we have yet to achieve the first step of creating robots that can learn to accomplish many different tasks triggered by environmental context or higher-level instruction. The goal of our robot learning laboratory is the realization of a general approach to motor skill learning, to get closer towards human-like performance in robotics. We focus on the solution of fundamental problems in robotics while developing machine-learning methods. Artificial agents that autonomously learn new skills from interaction with the environment, humans or other agents will have a great impact in many areas of everyday life, for example, autonomous robots for helping in the household, care of the elderly or the disposal of dangerous goods.

An autonomously learning agent has to acquire a rich set of different behaviours to achieve a variety of goals. The agent has to learn autonomously how to explore its environment and determine which are the important features that need to be considered for making a decision. It has to identify relevant behaviours and needs to determine when to learn new behaviours. Furthermore, it needs to learn what are relevant goals and how to re-use behaviours in order to achieve new goals. In order to achieve these objectives, our research concentrates on hierarchical learning and structured learning of robot control policies, information-theoretic methods for policy search, imitation learning and autonomous exploration, learning forward models for long-term predictions, autonomous cooperative systems and biological aspects of autonomous learning systems. In the Intelligent Autonomous Systems Institute at TU Darmstadt is headed by Jan Peters, we develop methods for learning models and control policy in real time, see e.g., learning models for control and learning operational space control. We are particularly interested in reinforcement learning where we try push the state-of-the-art further on and received a tremendous support by the RL community. Much of our research relies upon learning motor primitives that can be used to learn both elementary tasks as well as complex applications such as grasping or sports. In addition, there are research groups by Carlo d'Eramo, Dorothea Koert and Davide Tateo at our institute that also focus on these aspects. In case that you are searching for our address or for directions on how to get to our lab, look at our contact information. We always have thesis opportunities for enthusiastic and driven Masters/Bachelors students (please contact Jan Peters). Check out the open topics currently offered theses (Abschlussarbeiten) or suggest one yourself, drop us a line by email or simply drop by! We also occasionally have open Ph.D. or Post-Doc positions, see OpenPositions.

You can follow us on Twitter (ias_tudarmstadt), Blue Sky (@ias-tudarmstadt.bsky.social) or LinkedIn ... or just read the news here.

The Intelligent Autonomous Systems (IAS) group at TU Darmstadt is an extraordinary assembly of visionary researchers, engineers, and thought leaders dedicated to advancing the field of robotics and artificial intelligence. Driven by a shared passion for innovation, this dynamic team excels at bridging the gap between theory and real-world applications, pushing the boundaries of what is possible in autonomous systems. At the heart of the IAS group is a commitment to interdisciplinary collaboration, combining expertise in machine learning, computer vision, control theory, human-robot interaction, and robotics. The team is united by its pursuit of understanding and creating intelligent machines that can operate autonomously in complex and unstructured environments. Their work spans a diverse range of applications, from robotic manipulation and autonomous driving to aerial robotics and human-centered AI systems. The IAS group is renowned for its groundbreaking research in reinforcement learning, where they develop algorithms that enable robots to learn from their environments and improve their performance over time. Their work in deep learning and neural networks is pioneering new ways for robots to perceive and interpret the world, making strides in object recognition, semantic understanding, and motion planning. In addition, their research into multi-robot systems and swarm robotics is setting new standards for cooperation and coordination among autonomous agents, enabling them to perform complex tasks together seamlessly. What truly sets the IAS team apart is their relentless curiosity and drive to tackle the most challenging problems in the field. Their research is characterized by a strong emphasis on experimental validation and real-world applicability, ensuring that their innovations do not remain confined to academic papers but find tangible applications in industries ranging from manufacturing and healthcare to environmental monitoring and space exploration. The group’s collaborative ethos extends beyond their immediate team, as they actively engage with an international network of academic and industrial partners. They are passionate about nurturing the next generation of innovators, providing an inspiring environment for students and young researchers to thrive, learn, and contribute to the cutting edge of autonomous systems research. Under the visionary leadership of distinguished professors and supported by talented postdoctoral researchers, Ph.D. candidates, and graduate students, the IAS group at TU Darmstadt continues to redefine the frontiers of robotics and AI. With a steadfast dedication to excellence, innovation, and impact, the IAS team is not just shaping the future of intelligent autonomous systems but also leading the charge in creating a smarter, more connected world.